Bot Traffic in 2026: The Silent Killer of Your Marketing ROI

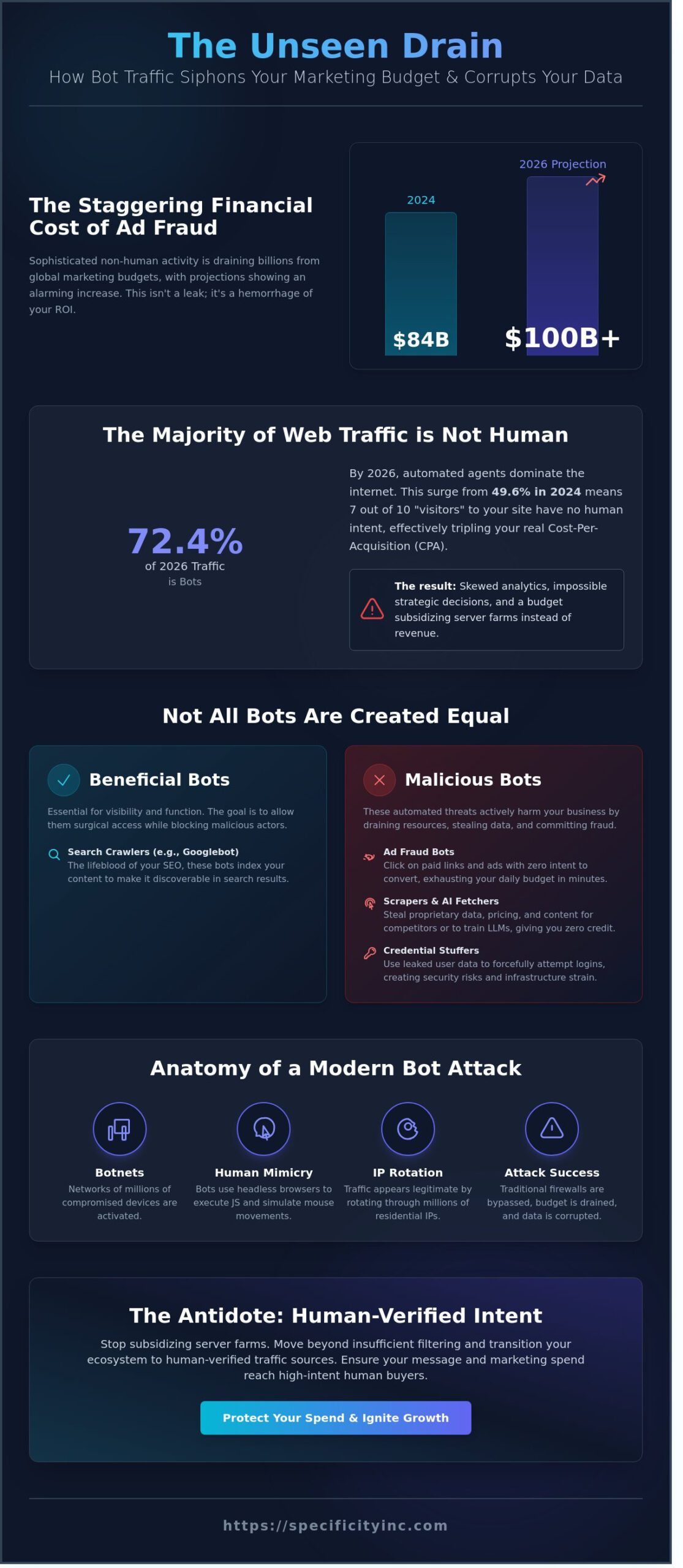

Juniper Research estimated that ad fraud, fueled primarily by bot traffic, siphoned $84 billion from global marketing budgets in 2023. By 2026, sophisticated non-human activity is projected to drain over $100 billion. You likely see the symptoms in your dashboard: high click-through rates that lead to zero conversions and skewed analytics that make strategic decisions impossible. It’s a mathematical certainty that if you’re relying on broad-stroke digital tactics, you’re subsidizing a server farm instead of scaling your revenue.

You recognize that vanity metrics are a liability. You need data that reflects human intent, not automated scripts. This article provides the surgical precision required to identify, neutralize, and bypass bot traffic entirely. I’ll show you how to ensure your marketing spend reaches high-intent human buyers by implementing a framework to eliminate ad fraud at the source. We’re moving beyond guesswork; we’re transitioning your entire ecosystem to human-verified traffic sources to protect your ROI and ignite predictable growth.

Key Takeaways

- Identify the sophisticated 2026 landscape where non-human interactions now make up the majority of total web traffic.

- Expose the mechanisms of ad fraud and analytical pollution that allow bot traffic to siphon your marketing budget and distort conversion data.

- Categorize evolving threats like AI fetchers and scrapers to protect your proprietary content from being siphoned for zero-click answers.

- Deploy advanced detection strategies that move beyond insufficient GA4 filtering to neutralize high-bounce anomalies with surgical precision.

- Transition to human-verified intent data to bypass the chaotic open web and ensure your message reaches high-intent buyers.

What is Bot Traffic? Defining the Non-Human Landscape in 2026

Your marketing data is likely a lie. If you aren’t filtering out the non-human visits that now dominate the digital ecosystem, your ROI is a vanity metric. In 2026, bot traffic means any automated request to a web server that originates from a software application rather than a human user. By some estimates, bot traffic accounts for 72.4% of all internet activity by mid-2026, a staggering increase from the 49.6% baseline reported in 2024. This surge is driven by the industrialization of AI fetchers and automated agents that scan, scrape, and interact with your assets at a frequency humans can’t replicate.

The 2026 landscape is defined by these automated agents. They don’t just browse; they consume. AI fetchers now work on behalf of Large Language Models (LLMs) to ingest real-time data, often bypassing traditional attribution models. This creates a massive gap in your conversion optimization strategy. If 7 out of 10 paid visits to your site lack human intent, you pay for ten visits to reach three people: your effective cost per human visit, and with it your cost-per-acquisition (CPA), is more than tripled unless you apply extreme granularity to your traffic analysis. You must move past the idea that every “hit” represents a potential customer.
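The cost inflation described above is simple division: the same spend is spread over only the human share of visits. Here is a minimal Python sketch; the function name and the $2.00 per-visit figure are illustrative assumptions, not measured data.

```python
def effective_cost_per_human_visit(cost_per_visit: float, bot_share: float) -> float:
    """Real cost of each human visit when a fraction of paid visits are bots.

    cost_per_visit: the per-visit cost your dashboard reports (all traffic).
    bot_share: fraction of visits that are non-human, in [0, 1).
    """
    if not 0 <= bot_share < 1:
        raise ValueError("bot_share must be in [0, 1)")
    # The same spend is spread over only the human share of visits.
    return cost_per_visit / (1 - bot_share)


# Illustrative figures: $2.00 per visit, 7 out of 10 visits non-human.
print(round(effective_cost_per_human_visit(2.00, 0.70), 2))  # 6.67
```

At a 70% non-human share the multiplier is 1 / 0.3, or about 3.33.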

The Anatomy of a Bot Attack

Modern botnets are sophisticated networks of millions of compromised devices, ranging from smart appliances to industrial sensors. These scripts don’t just ping a server; they simulate human behavior with terrifying precision. They use headless browsers to execute JavaScript, mimic erratic mouse movements, and vary their dwell times to appear legitimate. Traditional firewalls fail because these bots don’t look like malicious code; they look like a qualified lead from a residential IP in Chicago. They bypass security layers by rotating through millions of unique addresses, making it impossible to block them using simple blacklists. You’re no longer fighting simple scripts; you’re fighting distributed AI systems designed to exploit your infrastructure.

Good vs. Bad: Not All Bots Are Created Equal

Strategic dominance in the B2B space requires a scalpel, not a sledgehammer. You can’t simply block all automation without destroying your SEO visibility. Beneficial bots like Googlebot and Bingbot are the lifeblood of your search rankings. However, they share the wire with malicious actors that threaten your scalability. Identifying the difference requires pinpoint accuracy:

- Search Crawlers: Essential “good bots” that index your content for visibility.

- Scrapers: Aggressive agents that steal your proprietary data and pricing structures to give competitors an edge.

- Credential Stuffers: Malicious scripts that use leaked data to force their way into user accounts.

- Ad Fraud Bots: These agents burn through your ad spend by clicking on paid links with zero intent to convert, draining your budget in minutes.

The goal is to maintain surgical access for helpful crawlers while implementing intent-based targeting filters that isolate and neutralize the fraudulent ones. If you don’t master this distinction, the noise will eventually drown out your actual revenue.
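One well-documented way to keep surgical access for good crawlers is to verify them instead of trusting their User-Agent string. Google documents a reverse-then-forward DNS check for Googlebot; the sketch below implements that check with Python’s standard socket module. The resolver parameters exist only so the function can be tested without network access, and the sketch is IPv4-only because socket.gethostbyname_ex returns IPv4 addresses. Caching and timeouts, which you would want in production, are omitted.

```python
import socket
from typing import Callable, List, Tuple

# Hostname suffixes Google documents for verifying Googlebot by reverse DNS.
GOOGLE_CRAWLER_SUFFIXES = (".googlebot.com", ".google.com")

Resolver = Callable[[str], Tuple[str, List[str], List[str]]]


def is_verified_googlebot(
    ip: str,
    reverse_lookup: Resolver = socket.gethostbyaddr,
    forward_lookup: Resolver = socket.gethostbyname_ex,
) -> bool:
    """Reverse-then-forward DNS check for a client claiming to be Googlebot.

    A spoofed User-Agent fails here: the IP must reverse-resolve to a Google
    crawler hostname, and that hostname must resolve back to the same IP.
    """
    try:
        hostname, _aliases, _ips = reverse_lookup(ip)
    except OSError:  # socket.herror subclasses OSError
        return False
    if not hostname.rstrip(".").lower().endswith(GOOGLE_CRAWLER_SUFFIXES):
        return False
    try:
        _name, _aliases, forward_ips = forward_lookup(hostname)
    except OSError:  # socket.gaierror subclasses OSError
        return False
    # gethostbyname_ex returns IPv4 addresses only, so this check is IPv4-only.
    return ip in forward_ips
```

Call is_verified_googlebot(request_ip) only for requests whose User-Agent claims to be Googlebot, and cache the answer per IP, since DNS lookups are slow.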

The Economic Impact: How Bot Traffic Siphons Your Marketing Budget

Your marketing budget is under siege. Every dollar allocated to broad-reach digital campaigns is a target for sophisticated scripts designed to mimic human behavior. This isn’t a minor leakage; it’s a systemic drain. When bot traffic infiltrates your funnel, it doesn’t just skew numbers. It steals capital. Automated scripts engage with programmatic ads at scale, exhausting daily budgets by 10:00 AM before a single qualified human prospect has even seen your offer. In 2023, ad fraud was estimated to cost advertisers $84 billion globally, and that figure is climbing as botnets become more autonomous.

The damage extends to your hidden infrastructure costs. Serving pages to non-converting bots costs real money in server bandwidth, processing power, and API calls. If 30% of your hits are non-human and your costs scale with request volume, roughly 30% of your hosting and cloud spend goes to traffic that returns nothing. This creates a cycle of brand erosion where vanity metrics like “page views” and “sessions” climb while actual revenue stagnates. It’s a facade of growth that masks a decaying bottom line. You can’t scale a business on ghost impressions.

Programmatic Display and the Bot Trap

Open-exchange programmatic display is the primary hunting ground for sophisticated botnets. Broad targeting parameters are a magnet for fraud because they prioritize volume over intent. Without granular control, your ads appear on low-quality sites where bots generate fake impressions and clicks to collect a payout. This waste decimates your digital advertising ROI and rewards the very entities stealing from you. Precision isn’t just a preference; it’s the only defense against this automated theft.

Data Inaccuracy and Strategic Failure

Decisions made on bad data are worse than decisions made on no data. To protect your strategy, you must first understand what bot traffic is and how it pollutes your entire ecosystem. When bot traffic triggers events in your A/B tests, your winning variations are determined by algorithms, not customers. This “Bot Tax” leads C-suite executives to double down on failing strategies because the data looks promising on the surface.

A 2023 report from CHEQ indicated that nearly 25% of all web traffic comes from bad bots. If your reported conversion rate is 2% but 25% of your traffic is fake, your real conversion rate among human visitors is roughly 2.7% (2% ÷ 0.75). You’re optimizing for a lie. Businesses that value data-driven certainty must purge these anomalies to find the signal in the noise. Failure to quantify the bot tax on your operations means you aren’t just losing money; you’re losing the ability to compete based on reality.
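The correction is a single division, assuming bots never convert (if bots also fire fake conversion events, the true human rate is lower still). A minimal sketch using the figures from the paragraph above; the function name is hypothetical.

```python
def human_conversion_rate(reported_rate: float, bot_share: float) -> float:
    """Conversion rate among human visitors, assuming bots never convert."""
    if not 0 <= bot_share < 1:
        raise ValueError("bot_share must be in [0, 1)")
    # Conversions stay the same; the denominator shrinks to human visits only.
    return reported_rate / (1 - bot_share)


# 2% reported conversion rate, 25% of traffic fake.
rate = human_conversion_rate(0.02, 0.25)
print(f"{rate:.2%}")  # 2.67%
```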

Categorizing the Threat: AI Fetchers, Scrapers, and Fraud Bots

Bot traffic is no longer a monolith of simple spam. In 2026, it’s a sophisticated ecosystem of automated agents designed to extract value without providing a cent of ROI. To protect your margins, you must understand the four distinct categories of bots currently cannibalizing your marketing spend. AI Fetchers act as digital vampires, siphoning real-time content to power zero-click AI answers that keep users away from your site. Scraper bots execute high-speed reconnaissance, stealing proprietary pricing and data to give competitors an unearned edge. Impression bots artificially inflate ad views, tricking your team into paying for eyeballs that don’t exist. Finally, click bots serve as the primary driver of PPC budget depletion, systematically draining your funds through fraudulent engagement.

The scale of this problem is staggering. Recent data confirms that bot traffic now surpasses human traffic in total web volume, a milestone reached as criminal innovation outpaces legacy security measures. Precision is your only shield against this automated assault. If you aren’t identifying these actors at the granular level, you’re subsidizing your own irrelevance.

The AI Surge: 300% Growth in Fetcher Activity

The 2025-2026 period has seen a 300% explosion in AI fetcher activity. These agents don’t browse; they harvest. For B2B content marketers, this creates a lethal paradox. You produce high-value insights to drive traffic, but AI agents bypass the click entirely by delivering your answer directly within a search interface. This theft of intent-based traffic renders traditional SEO metrics useless. When AI delivers the solution without the visit, your conversion funnel dies at the source. Human-verified traffic is the only defense against this data siphoning. You must implement strict protocols to ensure your data serves prospects, not large language models looking for a free meal.
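One concrete protocol is a robots.txt rule for the AI crawlers you do not want harvesting your content. OpenAI documents GPTBot as the user-agent token for its crawler. The sketch below uses Python’s standard urllib.robotparser to check what a hypothetical policy allows; the policy and URL are illustrative. Keep in mind that robots.txt is advisory: it stops compliant crawlers, not scrapers that ignore it.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical policy: block OpenAI's GPTBot site-wide, allow every other agent.
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""

URL = "https://www.example.com/pricing"


def build_parser(robots_txt: str) -> RobotFileParser:
    """Parse robots.txt text directly instead of fetching it over the network."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


parser = build_parser(ROBOTS_TXT)
for agent in ("GPTBot", "Googlebot"):
    print(agent, parser.can_fetch(agent, URL))
# GPTBot False
# Googlebot True
```

The same check is a quick way to confirm a robots.txt change does what you intended before you deploy it, so search crawlers keep their access while the blocked AI agent loses it.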

Social Media and Bot-Driven Engagement

Social platforms have become a primary theater for bot-driven deception. Fake likes, shares, and clicks are the new baseline, making vanity metrics more dangerous than ever. A high-performing ad might actually be a bot-targeted sinkhole where 40% of the engagement is non-human. Differentiating between legitimate interest and automated noise requires a ruthless commitment to data science. You can’t rely on platform-provided analytics to grade your success. To survive, brands must pivot their 2026 social media advertising toward intent-based targeting that replaces broad, bot-heavy outreach. Stop paying for bot engagement and start demanding verified human conversion. Logic dictates that any marketing spend not protected by bot-mitigation technology is simply lost capital.

Beyond Basic Filtering: Strategies to Identify and Neutralize Bots

Google Analytics 4 (GA4) provides a baseline for security, but relying on its native filtering is a strategic failure. Standard tools identify known bots using outdated databases. Modern bot traffic is sophisticated. It utilizes headless browsers and residential proxies to mimic human signatures. You cannot win a high-stakes digital war with a screen door. Precision requires moving beyond client-side scripts and analyzing the raw data at the server level.

Identify anomalies by examining session quality with surgical precision. A 100% bounce rate coupled with a 0:00 session duration is a definitive red flag. In 2025, industry data revealed that 42% of non-human traffic successfully bypassed JavaScript-based detection by executing only the initial request. You must deploy server-side detection to catch these ghosts. Behavioral analysis is your primary weapon. Humans don’t move in straight lines. They don’t click 50 links in three seconds. If the interaction patterns lack the chaotic variability of human biology, it’s a machine.
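The session-level red flags in this paragraph translate directly into simple heuristics. The sketch below checks a session for a zero-duration bounce, an inhuman click rate, and a ruler-straight cursor path. The Session fields and both thresholds are hypothetical and would need calibrating against your own traffic; no single signal should be treated as proof.

```python
import math
from dataclasses import dataclass, field
from typing import List, Tuple

# Hypothetical thresholds for illustration; calibrate against your own traffic.
MAX_CLICKS_PER_SECOND = 5.0
MAX_PATH_STRAIGHTNESS = 0.99  # 1.0 means a perfectly straight cursor path


@dataclass
class Session:
    pageviews: int
    duration_seconds: float
    click_times: List[float] = field(default_factory=list)  # seconds since start
    mouse_path: List[Tuple[float, float]] = field(default_factory=list)


def path_straightness(points: List[Tuple[float, float]]) -> float:
    """Straight-line distance divided by the distance actually travelled."""
    travelled = sum(math.dist(a, b) for a, b in zip(points, points[1:]))
    if travelled == 0:
        return 0.0
    return math.dist(points[0], points[-1]) / travelled


def bot_signals(s: Session) -> List[str]:
    """Return the heuristic red flags a session trips (empty list = none)."""
    signals = []
    # The "100% bounce, 0:00 duration" pattern: one request, no time on site.
    if s.pageviews <= 1 and s.duration_seconds == 0:
        signals.append("zero-duration bounce")
    clicks = sorted(s.click_times)
    if len(clicks) >= 2:
        span = clicks[-1] - clicks[0]
        rate = (len(clicks) - 1) / span if span > 0 else math.inf
        if rate > MAX_CLICKS_PER_SECOND:
            signals.append("inhuman click velocity")
    # Require at least three points before "straight" means anything.
    if len(s.mouse_path) >= 3 and path_straightness(s.mouse_path) >= MAX_PATH_STRAIGHTNESS:
        signals.append("ruler-straight cursor path")
    return signals


# The "50 links in three seconds" case: 50 clicks spread evenly over 3 s.
fast = Session(pageviews=50, duration_seconds=3.0,
               click_times=[i * 3 / 49 for i in range(50)])
print(bot_signals(fast))  # ['inhuman click velocity']
```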

Aggressive defense involves tarpitting. Instead of a hard block that signals your presence to a scraper, a tarpit holds the connection open. It feeds the bot data at an agonizingly slow pace. This consumes the bot’s CPU cycles and memory. It makes the cost of attacking your site higher than the potential reward. You win by making fraud unprofitable.
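A tarpit can be sketched with Python’s asyncio streams, which keep the cost of each held connection low on your side. This is a minimal illustration under assumed settings (port 8081, one byte every 10 seconds, a junk HTML payload); it assumes your reverse proxy already routes only flagged traffic to it, and it must never receive real users or verified crawlers.

```python
import asyncio

# Hypothetical values: at a 10-second drip, this payload holds a socket for hours.
DRIP_INTERVAL_SECONDS = 10.0
DRIP_PAYLOAD = (b"HTTP/1.1 200 OK\r\nContent-Type: text/html\r\n\r\n"
                + b"<p>" * 1000)


async def tarpit_handler(reader: asyncio.StreamReader,
                         writer: asyncio.StreamWriter,
                         interval: float = DRIP_INTERVAL_SECONDS) -> None:
    """Keep a flagged client connected and feed it one byte per interval."""
    try:
        await reader.read(1024)  # read (part of) the request; its content is ignored
        for i in range(len(DRIP_PAYLOAD)):
            writer.write(DRIP_PAYLOAD[i:i + 1])
            await writer.drain()
            await asyncio.sleep(interval)
    except ConnectionError:
        pass  # the bot gave up; nothing else to do
    finally:
        writer.close()


async def main(host: str = "127.0.0.1", port: int = 8081) -> None:
    # Only traffic already flagged as bot-like should ever be routed here.
    server = await asyncio.start_server(tarpit_handler, host, port)
    async with server:
        await server.serve_forever()
```

Start it with asyncio.run(main()). Each held connection still occupies one of your own sockets, so cap the number of concurrent tarpit connections.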

Advanced Detection Metrics

- Data Center Monitoring: Track traffic spikes from AWS, Azure, or Google Cloud IP ranges. Real customers browse from ISPs like Comcast or Verizon, not server racks in Northern Virginia.

- Biometric Verification: Analyze mouse movement and keystroke velocity. Bots lack the micro-fluctuations found in human motor skills.

- Log File Analysis: Use your server logs to pinpoint requests that never trigger a tracking pixel. This is where the most dangerous bots hide.
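Data-center monitoring and log file analysis can share one pass over your access logs. The sketch below assumes Common/Combined Log Format lines and a tracking pixel served at /px.gif; both the pixel path and the IP ranges are placeholders (the ranges are RFC 5737 documentation blocks, not real cloud ranges). Treat “no pixel” as a signal to investigate rather than proof, since privacy tools also block pixels for real humans.

```python
import ipaddress
import re
from collections import defaultdict
from typing import Dict, Iterable, List, Set

# Placeholder ranges from the RFC 5737 documentation blocks, NOT real cloud
# ranges. In practice, load the IP lists your cloud providers publish.
DATA_CENTER_NETWORKS = [
    ipaddress.ip_network("198.51.100.0/24"),
    ipaddress.ip_network("203.0.113.0/24"),
]

PIXEL_PATH = "/px.gif"  # hypothetical tracking-pixel endpoint

# Client IP and request path from a Common/Combined Log Format line.
LOG_LINE = re.compile(r'^(\S+) \S+ \S+ \[[^\]]+\] "(?:GET|POST|HEAD) (\S+)')


def is_data_center_ip(ip: str) -> bool:
    try:
        addr = ipaddress.ip_address(ip)
    except ValueError:  # e.g. a hostname instead of an IP in the log
        return False
    return any(addr in net for net in DATA_CENTER_NETWORKS)


def audit_log(lines: Iterable[str]) -> Dict[str, List[str]]:
    """Flag IPs that load pages but never the pixel, and IPs from data centers."""
    page_hits: Dict[str, int] = defaultdict(int)
    pixel_ips: Set[str] = set()
    for line in lines:
        match = LOG_LINE.match(line)
        if not match:
            continue
        ip, path = match.groups()
        if path.startswith(PIXEL_PATH):
            pixel_ips.add(ip)
        else:
            page_hits[ip] += 1
    return {
        "no_pixel": sorted(ip for ip in page_hits if ip not in pixel_ips),
        "data_center": sorted(ip for ip in set(page_hits) | pixel_ips
                              if is_data_center_ip(ip)),
    }


sample = [
    '203.0.113.7 - - [10/Mar/2026:10:00:00 +0000] "GET /pricing HTTP/1.1" 200 5120',
    '192.0.2.10 - - [10/Mar/2026:10:00:01 +0000] "GET /pricing HTTP/1.1" 200 5120',
    '192.0.2.10 - - [10/Mar/2026:10:00:02 +0000] "GET /px.gif?e=view HTTP/1.1" 200 43',
]
print(audit_log(sample))
# {'no_pixel': ['203.0.113.7'], 'data_center': ['203.0.113.7']}
```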

Filtering and Blocking Frameworks

Set custom alerts for traffic thresholds. If a specific region suddenly generates a 400% increase in hits without a corresponding ad spend, shut it down immediately. Stop using traditional CAPTCHAs. They frustrate 15% of your actual customers and bots can now solve them with 98% accuracy using AI. Shift to invisible behavioral challenges that verify intent without friction.
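The threshold rule above is easy to automate. Note that a 400% increase means current traffic is at least five times the baseline. In this sketch the region names, hit counts, and spend-change flags are hypothetical inputs you would pull from your analytics and ad platforms.

```python
from typing import Dict, List

# "A 400% increase" means current hits are at least five times the baseline.
SPIKE_INCREASE_PCT = 400.0


def regions_to_block(baseline_hits: Dict[str, int],
                     current_hits: Dict[str, int],
                     spend_increased: Dict[str, bool]) -> List[str]:
    """Regions whose hits rose by SPIKE_INCREASE_PCT or more with no ad-spend increase."""
    flagged = []
    for region, current in current_hits.items():
        baseline = baseline_hits.get(region, 0)
        if baseline == 0:
            continue  # no history to compare against; review these by hand
        increase_pct = (current - baseline) / baseline * 100
        if increase_pct >= SPIKE_INCREASE_PCT and not spend_increased.get(region, False):
            flagged.append(region)
    return sorted(flagged)


# Hypothetical hourly hit counts per region.
baseline = {"us-east": 1000, "uk": 800, "de": 500}
current = {"us-east": 5200, "uk": 900, "de": 2600}
print(regions_to_block(baseline, current, {"de": True}))  # ['us-east']
```

The "de" region shows the same spike as "us-east" but is not flagged, because its ad spend also rose.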

Clean data is the lifeblood of your Search Engine Marketing (SEM) efforts. If you feed bot-inflated conversion data back into Google’s algorithms, you’re training the system to find more bots. This creates a death spiral for your budget. You must sanitize your database daily to ensure your bidding strategies are based on human intent, not automated noise.
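Sanitizing conversion data before it reaches the bidding algorithms can be as simple as dropping every conversion tied to a session your bot detection flagged. The Conversion type and field names below are hypothetical, and the upload step is omitted because it depends on your ad platform’s API.

```python
from dataclasses import dataclass
from typing import Iterable, List, Set


@dataclass(frozen=True)
class Conversion:
    conversion_id: str
    session_id: str
    value: float


def human_conversions(conversions: Iterable[Conversion],
                      flagged_sessions: Set[str]) -> List[Conversion]:
    """Keep only conversions whose session was not flagged as bot-like."""
    return [c for c in conversions if c.session_id not in flagged_sessions]


batch = [Conversion("c1", "s-100", 250.0), Conversion("c2", "s-bot-7", 250.0)]
clean = human_conversions(batch, flagged_sessions={"s-bot-7"})
print([c.conversion_id for c in clean])  # ['c1']
```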

Stop letting automated fraud erode your margins. Partner with Specificity Inc. to secure your data and dominate your market with surgical precision.

The Specificity Antidote: Human-Verified Traffic and Intent-Based Precision

Broad-stroke marketing is a relic of a less sophisticated era. If you’re still buying bulk impressions on the open web, you’re effectively subsidizing bot farms. Specificity Inc. replaces hope with surgical precision. We don’t target broad demographics; we pinpoint individual human buyers based on real-time intent data. This methodology bypasses the non-human share of web traffic altogether. By the time an ad serves on CTV or programmatic display, we’ve already verified the recipient’s identity and intent. This isn’t just about avoiding bot traffic; it’s about owning the conversion path from the first touchpoint.

Precision Targeting vs. The Bot-Infested Open Web

The open web is a minefield of automated scripts and sophisticated ad-fraud loops. Traditional agencies focus on reach, but reach is a vanity metric when bots are doing the reaching. Our methodology relies on deterministic data sets that identify actual decision-makers. We track behaviors that bots can’t replicate: specific B2B research patterns, high-value content downloads, and cross-device intent signals. This level of granularity ensures your ads are seen by humans with budgets, not scripts with proxies. Our approach to B2B marketing in 2026 centers on this strategic shift toward intent-based precision. We leverage high-fidelity data to ensure every dollar spent targets a person who is actively in-market for your solution.

Dominating with Human-Verified Traffic

The ROI difference is immediate and measurable. When you eliminate the noise found in standard programmatic auctions, your performance metrics finally reflect commercial reality. We call it the “Bot Tax” recovery. In 2025, industry estimates showed ad fraud cost brands $100 billion globally. By 2026, that figure will only escalate for those who refuse to adapt. Specificity Inc. reallocates those recovered funds into high-performing creative and aggressive demand generation. Human-verified traffic is the most critical metric in your 2026 marketing stack. It’s the baseline for every successful campaign we launch.

- Stop paying for ghost clicks and non-viewable impressions.

- Verify every impression through deterministic data and human-centric identifiers.

- Scale with confidence, knowing your audience has a pulse and a purchase requirement.

The era of plausible deniability in digital advertising is over. You can’t afford to ignore the bot traffic eating your margins. Demand transparency and human verification in every campaign. It’s time to move beyond the chaos of the open web and embrace the certainty of human-verified precision. Partner with a team that values ROI over vanity metrics. Let’s build a marketing engine that actually works.

Stop Funding the Machine and Start Driving Revenue

By 2026, bot traffic will no longer be a nuisance; it will be a systemic drain that consumes nearly 42% of global ad spend according to industry projections. Traditional broad-stroke marketing is dead. You cannot scale a business on ghost impressions or AI-generated clicks that will never sign a contract. The landscape demands surgical precision. You must pivot from passive filtering to proactive, intent-based audience targeting to protect your bottom line. Industry estimates now put annual ad fraud losses above $100 billion. This is a high-stakes environment where only human-verified traffic solutions provide a legitimate path to growth. Specificity Inc. delivers the granularity required to pinpoint real buyers in a sea of non-human noise. We prioritize ROI over vanity metrics. It’s time to stop subsidizing scrapers and start engaging the 1% of the market that actually matters. Your pipeline depends on the integrity of your data. Secure your competitive advantage by demanding absolute transparency in every click.

Stop wasting your budget on bots; get human-verified traffic today.

Frequently Asked Questions

Is bot traffic always bad for my website?

No, bot traffic isn’t inherently destructive; its impact depends entirely on its classification. Essential “good bots” like Googlebot facilitate search indexing, and 2024 data from Imperva shows good bots account for 17.6% of web activity. The same report found that malicious bots represent 32% of all traffic. You must distinguish between the scripts that help your site and the ones that cannibalize your marketing budget.

How can I tell if my social media ads are being clicked by bots?

High click-through rates coupled with a sub-one-second time on site are definitive indicators of automated interference. If your dashboard shows a 10% CTR but a 98% bounce rate from a specific IP range, you’re likely being targeted by click bots or click farms. We analyze these anomalies with granularity to identify non-human patterns. Genuine intent-based targeting produces engagement durations that automated scripts can’t replicate without sophisticated, costly emulation.
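The IP-range check described in this answer can be sketched by grouping paid-click visits into IPv4 /24 ranges and measuring how many leave in under a second. The one-second cutoff comes from the answer above; the minimum visit count, the 90% share threshold, and the AdVisit type are hypothetical.

```python
import ipaddress
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, Iterable, List

# Hypothetical thresholds; tune them against your own campaign data.
FAST_BOUNCE_SECONDS = 1.0
MIN_VISITS_PER_RANGE = 20
FAST_BOUNCE_SHARE_LIMIT = 0.9


@dataclass
class AdVisit:
    ip: str
    seconds_on_site: float


def suspicious_ranges(visits: Iterable[AdVisit]) -> List[str]:
    """IPv4 /24 ranges where most paid clicks leave in under a second."""
    totals: Dict[str, int] = defaultdict(int)
    fast: Dict[str, int] = defaultdict(int)
    for v in visits:
        addr = ipaddress.ip_address(v.ip)
        if addr.version != 4:
            continue  # this sketch only groups IPv4 traffic
        network = str(ipaddress.ip_network(f"{addr}/24", strict=False))
        totals[network] += 1
        if v.seconds_on_site < FAST_BOUNCE_SECONDS:
            fast[network] += 1
    return sorted(
        net for net, n in totals.items()
        if n >= MIN_VISITS_PER_RANGE and fast[net] / n >= FAST_BOUNCE_SHARE_LIMIT
    )


visits = [AdVisit(f"203.0.113.{i}", 0.4) for i in range(25)]
visits += [AdVisit(f"198.51.100.{i}", 45.0) for i in range(25)]
print(suspicious_ranges(visits))  # ['203.0.113.0/24']
```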

Does bot traffic affect my organic search engine rankings?

Automated scripts indirectly erode your organic performance by polluting the user-experience data you rely on. When 40% of your sessions are non-human, your reported average session duration and conversion rates plummet. Google has not disclosed exactly which behavioral signals its core updates, including the March 2024 Core Update, use to assess site quality, but bot-distorted data leads to poor optimization decisions that can cost you visibility to competitors who maintain cleaner traffic profiles.

What is the difference between a traffic bot and a botnet?

A traffic bot is a standalone script designed for a specific task; a botnet is a coordinated network of thousands of hijacked devices. While a simple bot might originate from a single server, a botnet utilizes distributed IP addresses to bypass standard firewalls. Spamhaus reports from 2023 show that modern botnets control over 1,000,000 nodes. This scale allows them to simulate diverse geographic traffic, making detection impossible without advanced, data-driven security layers.

Can I completely block all bot traffic from my site?

You can’t completely block all bot traffic without also severing your connection to search engines and critical monitoring tools. A strategic approach focuses on neutralizing the 32% of malicious traffic identified in recent 2024 industry reports. We implement surgical filtering at the edge to protect your conversion optimization efforts. This ensures your server resources support real customers rather than processing useless automated requests that offer zero ROI.

How does AI fetcher traffic differ from traditional bot traffic?

AI fetcher traffic differs from traditional automation by focusing on data ingestion for Large Language Model training rather than interaction. While traditional scripts might target ad spend, fetchers like OpenAI’s GPTBot, launched in August 2023, consume massive amounts of content to refine AI responses. This creates a unique challenge for resource management. You need to decide if the visibility within AI answers justifies the server load and content scraping these fetchers demand.

What is human-verified traffic and how do I get it?

Human-verified traffic is a data segment validated through multi-factor behavioral signals, biometrics, or authenticated login states. You secure this through advanced attribution platforms that filter out non-human patterns before they enter your sales funnel. DoubleVerify’s 2024 research confirms that verified human traffic delivers 15% higher conversion rates than unverified pools. We prioritize this granularity to ensure every dollar of your budget targets a real person with genuine intent.

How much of my marketing budget is typically lost to bot traffic?

Marketing departments lose an average of 25% of their digital ad spend to fraudulent clicks and automated interference. Industry estimates put the resulting global losses at $84 billion in 2023. This isn’t just a technical glitch; it’s a direct hit to your bottom line. By failing to scrub your traffic, you’re subsidizing criminal networks instead of acquiring customers. Precision in your traffic sourcing is the only way to reclaim this lost capital.